I had the same issue and resolved it by turning off the Auto Fan setting. Try it and let me know.

Hi. Unfortunately no, I have AutoFAN off…

Any luck? Same issue here. I tried the Suprim BIOS and swapped the memory thermal pads.

Hi there, not yet. I’ve seen other forums talking about bugs in the NVIDIA drivers for this card.

I have no idea how to fix this. I’ll apply thermal pads to the junction and the other chips around it, just to make sure the vBIOS isn’t power-limiting for some crazy reason. “nvidia-smi -q” doesn’t show any limiting, so this shouldn’t be a thermal issue…
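For anyone who wants to check the same thing: `nvidia-smi -q -d PERFORMANCE` narrows the report to the performance section, which lists the clock throttle reasons (such as `SW Power Cap` or `HW Slowdown`) as `Active` or `Not Active`. Below is a minimal Python sketch that picks out the active ones. The `SAMPLE` text is an illustrative excerpt, not real output from this card, and the exact labels can differ between driver versions, so treat the parsing as an assumption.

```python
import subprocess


def active_throttle_reasons(report: str) -> list[str]:
    """Return the names of entries marked exactly 'Active' in nvidia-smi -q text."""
    active = []
    for line in report.splitlines():
        if ":" not in line:
            continue  # section headers like "Clocks Throttle Reasons" have no colon
        name, _, value = line.partition(":")
        # Only an exact "Active" counts; "Not Active" is skipped.
        if value.strip() == "Active":
            active.append(name.strip())
    return active


def read_performance_report() -> str:
    """Run nvidia-smi locally (needs the NVIDIA driver installed)."""
    result = subprocess.run(
        ["nvidia-smi", "-q", "-d", "PERFORMANCE"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


# Illustrative excerpt only; a real report has more fields.
SAMPLE = """
    Clocks Throttle Reasons
        Idle                              : Not Active
        Applications Clocks Setting       : Not Active
        SW Power Cap                      : Active
        HW Slowdown                       : Not Active
"""

if __name__ == "__main__":
    print(active_throttle_reasons(SAMPLE))  # ['SW Power Cap']
```

On a mining rig you would pass `read_performance_report()` instead of `SAMPLE`. An empty list while the card is stuck below its power limit matches what is described above: the driver is not reporting a power or thermal throttle.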

I have this problem too. It just won’t go above 209 W in HiveOS. In Windows, using Afterburner, the card sits nicely at 99 MH/s with a 92 °C memory temp.

If you look at HWInfo in Windows, you can see that one of the 8-pins is pulling 150 W, 100% of its rating, and that is the limiting factor. In Windows, the Suprim X BIOS gets around this with an increased limit, making it possible to draw around 160 W from this pin and get a proper ~100 MH/s.

Unfortunately, it does not work in HiveOS. In HiveOS, I and some other users found that you can flash other BIOSes, like the EVGA FTW or the Gigabyte Aorus Xtreme, and the card then hashes normally. It will mess up the sensor readings, though. Mine is now set to a 304 W PL and is doing just fine. The power draw at the wall is normal even with that PL, since 304 W is only what the (now inaccurate) software reading shows.

This is some kind of BIOS limitation on this Gaming X. Kinda sad, as the Suprim and even the Vision are not affected.
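The bottleneck described here is a single input hitting its own limit while the card as a whole is still under its power limit. A minimal sketch of that check, assuming per-rail readings like the ones HWInfo shows in Windows: the rail names and the split of the readings are hypothetical, chosen only so the total matches the 209 W ceiling reported above. The limits used are the nominal connector ratings (150 W per 8-pin, 75 W for the PCIe slot), not this card’s actual BIOS limits.

```python
from dataclasses import dataclass


@dataclass
class Rail:
    name: str
    draw_w: float   # power currently reported on this input
    limit_w: float  # limit assumed for this input

    @property
    def utilization(self) -> float:
        return self.draw_w / self.limit_w


def limiting_rails(rails: list[Rail], threshold: float = 0.99) -> list[str]:
    """Names of rails at (or within 1% of) their assumed limit."""
    return [r.name for r in rails if r.utilization >= threshold]


# Hypothetical readings: ~209 W in total, but one 8-pin is already at 150 W.
readings = [
    Rail("PCIe slot", 20.0, 75.0),
    Rail("8-pin #1", 150.0, 150.0),
    Rail("8-pin #2", 25.0, 150.0),
    Rail("8-pin #3", 14.0, 150.0),
]

if __name__ == "__main__":
    print(sum(r.draw_w for r in readings))  # 209.0
    print(limiting_rails(readings))         # ['8-pin #1']
```

Raising the assumed limit on that rail to 160 W, as the Suprim X BIOS reportedly allows in Windows, drops it to about 94% utilization, so it no longer shows up as the limiting rail.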

Hi Delgon, thanks for the update, finally a solution. Hope you don’t mind if I ask a few questions:

- Have you tested on another Linux system, like RaveOS?

- Have you replaced thermal pads?

- Can you specify a BIOS for us to test? Black, Ultra, XC3, …?

It’s really strange if it’s a vBIOS issue that only shows up in Hive… If so, it should be an issue with all Linux drivers, for example…

Nope, I did not test on any other Linux distro. It could be a driver issue, but if it were, why is it also present in Windows? I think the Linux driver still limits the power draw from components like an 8-pin to the 150 W specification, whereas in Windows an increased power limit is able to override it. Like I said, it can draw above 160 W if you set the power limit to 105%+ with the Suprim X BIOS.

After the BIOS flash I replaced the thermal pads. I used Thermal Grizzly Minus Pad 8 and also added some more Noctua thermal paste to the small metal plate that connects the memory to the heatsink: memory modules → pads → metal plate → paste → main heatsink (who thought of this design!? Total BS IMO).

I tried around 20 different BIOSes, taken from techpowerup.com. I tried all the MSI ones; the Suprim X only worked in Windows. I don’t remember all of them, but I know that these work in HiveOS:

- Gigabyte AORUS Extreme: 94.02.26.88.26

- EVGA FTW3: 94.02.26.48.68

- EVGA FTW3: 94.02.26.48.88

Other FTW3 and Aorus Extreme BIOSes may also work. At first I thought only the ones with a 400 W+ limit would solve the problem, but the Suprim X also has that upper limit and is still limited.

I don’t feel confident flashing all 90 that are on the site to find out which ones work, but the ones above might be a good place to start.

Beware, some things look weird in HWInfo in Windows if you flash those BIOSes. It shows just two 8-pins, IIRC, instead of three. I’m not sure if that will have any negative impact in the future, but for now it hashes just fine. It is a little lower than my other 3080 (at the same memory OC), but I don’t know what that could mean.

Thanks for this, I will apply the pads and try it on both MSI cards this weekend. I’ll post some screenshots here afterwards.

Can you post a screenshot of your Hive dashboard, to see hashrate, fan, core, mem, PL settings?

Thanks again!

Phk

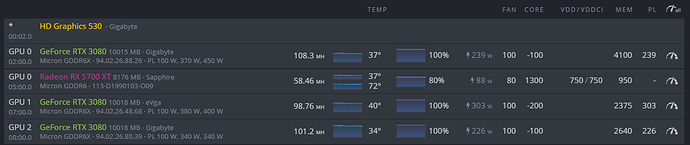

The 3rd card, shown as EVGA, is the card in question. The BIOS flash changed that too, as one would expect.

As you can see, I use the 94.02.26.48.68 BIOS. Everything except the PL works more or less the same way. I found that around 305, give or take a few, is where you want to be with it. Don’t worry, it is not pulling 305 W from the wall; its draw is similar to my other two cards.

For the 3080s I run the fans at 100%, just to keep memory temps as low as possible; they get kinda hot for me even with replaced pads. I did not replace the backplate pads on this MSI, though, and left the stock ones.

Let me just share this. Ignore the opening post and see the comment I have linked here:

www.reddit.com/r/gpumining/comments/koiuvb/msi_rtx_3080_gaming_x_trio_ethereum_mining_fix/gmrq9b2/

It describes exactly the same issue and solution. It also has a great table comparing the sensor-reported power with the GPU’s actual draw measured at the wall:

| Sensor-reported power (W) | Actual power at the wall (W) |
|---|---|
| 200 | 138 |
| 220 | 153 |
| 240 | 170 |
| 260 | 185 |
| 270 | 195 |
| 280 | 201 |
| 290 | 213 |
| 295 | 215 |
| 300 | 218 |
| 305 | 224 |
| 310 | 227 |
| 315 | 232 |
| 317 | 235 |
| 320 | 240 |
| 380 | 280 |
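To turn a PL value from the dashboard into an estimated wall draw, you can interpolate between the measured points in the table above. A minimal Python sketch, assuming straight-line behaviour between neighbouring measurements; it refuses readings outside the measured range rather than extrapolating. These numbers come from one user’s setup, so treat the results as rough estimates for other cards or PSUs.

```python
from bisect import bisect_left

# (sensor-reported W, measured at the wall W), copied from the table above.
CALIBRATION = [
    (200, 138), (220, 153), (240, 170), (260, 185), (270, 195),
    (280, 201), (290, 213), (295, 215), (300, 218), (305, 224),
    (310, 227), (315, 232), (317, 235), (320, 240), (380, 280),
]


def wall_watts(reported: float) -> float:
    """Linearly interpolate the actual wall draw from the sensor reading."""
    xs = [x for x, _ in CALIBRATION]
    if not xs[0] <= reported <= xs[-1]:
        raise ValueError(
            f"{reported} W is outside the measured range {xs[0]}-{xs[-1]} W")
    i = bisect_left(xs, reported)      # first point with x >= reported
    x1, y1 = CALIBRATION[i]
    if x1 == reported:
        return float(y1)               # exact table entry
    x0, y0 = CALIBRATION[i - 1]        # previous point, so x0 < reported < x1
    return y0 + (y1 - y0) * (reported - x0) / (x1 - x0)


if __name__ == "__main__":
    print(wall_watts(305))  # 224.0
    print(wall_watts(250))  # 177.5
```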

Hi there,

I wasn’t able (yet) to pad the cards and try the EVGA vBIOS hack, but I noticed something totally strange.

Try setting the “intensity” to exactly 92%, and the power gets unlocked.

In my case it doesn’t make up for the 8% lost, but you can try it. Somehow the card unlocks its power consumption, and you can tweak the memory. I haven’t tested it enough yet, though; I need some good pads and thermal paste for the GPU. Any recommendations for pads or paste are welcome.

Cheers

Hi All,

I have my findings listed in the following post, hope it helps!

How can I check and manage memory temps in HiveOS? I’ve been looking for this info like crazy.

I think it only works under windows with gpu-z.

I don’t understand your core clock settings. Why +1155 instead of, for example, -200 as usual?

Even on Windows, don’t you “underclock” the core?

It’s a really good question that everyone asks around, but I still don’t know the answer.

Or HWInfo! It works OK.

Well, everyone has their own way of overclocking and undervolting their hardware. If you set -200, for example, you take whatever core clock the driver or the card decides on and reduce it by 200. When you set a clock like, in my case, 1155, HiveOS sets the core clock to 1155 MHz, which means it is static and won’t change. I personally think this helps keep the hashrate very stable, and that’s why I do it, but it may differ from card to card.

If you undervolt a card and the card decides to lower the core clock, or the clock fluctuates a fair bit, that can result in invalid shares. Setting a specific core clock ensures the core won’t start drawing more power because of a boost. That keeps the memory stable: a boost can cause extra heat, and with a fixed clock the VRAM doesn’t have to compensate for the resulting power deficit.

I’m not very good at explaining, but think of the power as a pool shared by two resources, the core and the VRAM: if one draws too much, the performance of the other will degrade.

This only works if you know the best value for your hardware. Set the core clock too low and you will lose hashrate; set it too high and you increase your power usage, and it won’t have much effect anyway if that clock can’t be reached within the set power limit.

In my experience, anything above 189 in HiveOS has no effect unless you set it above your card’s minimum core clock, in which case HiveOS locks the core clock at that value.

Not sure if I explained it well enough, but this is all based on my experience and the knowledge I’ve accumulated over the past few months.
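The offset versus fixed-clock behaviour described in this thread can be modelled as follows. This is a hypothetical sketch of the rule as the post describes it, not HiveOS’s actual code: a value at or below the card’s minimum core clock acts as an offset on whatever clock the driver picks, and a value above it pins the clock. `MIN_CORE_CLOCK_MHZ = 210` and the driver clocks in the demo are illustrative assumptions. Outside HiveOS, `nvidia-smi -lgc MIN,MAX` (`--lock-gpu-clocks`, needs root) is the driver’s own way to pin the core clock; the helper below only builds that command and does not run it.

```python
# Hypothetical threshold: the lowest core clock the card supports, in MHz.
MIN_CORE_CLOCK_MHZ = 210


def effective_core_clock(setting: int, driver_clock: int,
                         min_core: int = MIN_CORE_CLOCK_MHZ) -> tuple[str, int]:
    """Return (mode, resulting core clock in MHz) for a core-clock setting."""
    if setting > min_core:
        # Absolute value: the clock is pinned, whatever the driver would boost to.
        return "locked", setting
    # Offset: follows the driver's own clock, so it moves whenever the boost moves.
    return "offset", driver_clock + setting


def lock_command(clock_mhz: int) -> list[str]:
    """nvidia-smi command that pins both min and max core clock to one value."""
    return ["nvidia-smi", "-lgc", f"{clock_mhz},{clock_mhz}"]


if __name__ == "__main__":
    for boost in (1650, 1710, 1800):  # hypothetical driver-chosen clocks
        print(boost, effective_core_clock(-200, boost), effective_core_clock(1155, boost))
    print(" ".join(lock_command(1155)))
```

The demo shows the point being made: with -200 the resulting clock shifts every time the driver’s boost clock changes, while 1155 stays put.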