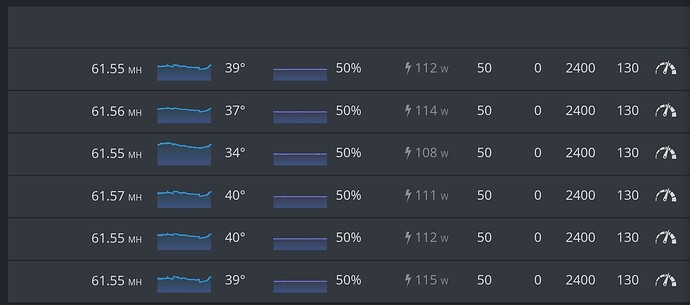

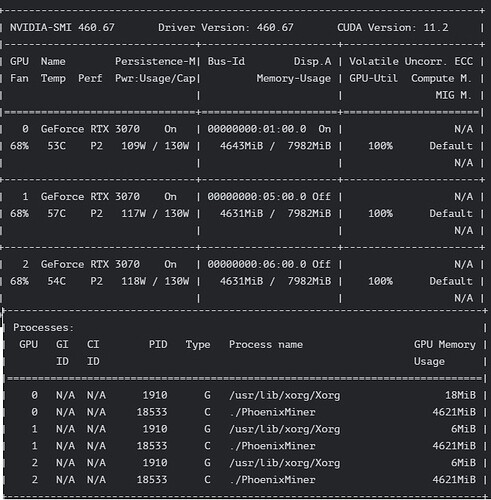

Effective GPU clock = lgc value - offset value

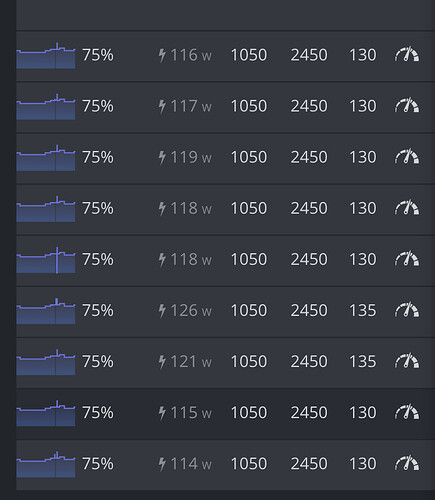

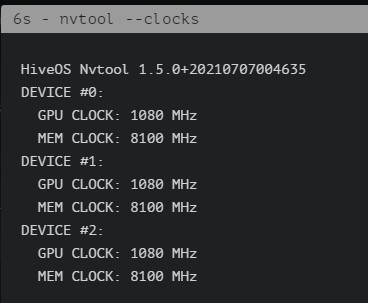

In your first case the effective clock = 750 - (-500) = 1250 MHz, which is too high for a 3070 and too low for a 3060 Ti. For a 3070 you should get better results at around 1080 MHz effective GPU clock, and for a 3060 Ti at around 1425 MHz. But every piece of silicon is different, so YMMV.

When you enter 750 in the GUI, your offset is 0, so

effective clock = 750 - 0 = 750 MHz, hence the lower hashrate.
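To make the arithmetic easy to re-check for other settings, here is a minimal Python sketch of the rule above. The function name `effective_clock_mhz` is made up for illustration and is not part of any tool; it only assumes the "lgc value minus offset value" relation holds as described.

```python
def effective_clock_mhz(lgc_mhz: int, offset_mhz: int) -> int:
    """Effective GPU clock, assuming: effective = lgc value - offset value."""
    return lgc_mhz - offset_mhz


# The two cases discussed above, as (lgc, offset) in MHz.
cases = {
    "lgc 750, offset -500": (750, -500),
    "lgc 750 entered in the GUI, offset 0": (750, 0),
}

for label, (lgc, offset) in cases.items():
    print(f"{label}: {effective_clock_mhz(lgc, offset)} MHz")
```

It should print 1250 MHz for the first case and 750 MHz for the second, matching the numbers worked out by hand.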

It is strange, but setting offset -400 and lgc 680 gives 3-5 W lower power consumption at the same hashrate compared to offset 0 and lgc 1080 (entering 1080 directly in the GUI).
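Both setups land on the same effective clock, which would explain the identical hashrate. A rough Python sketch of the reverse calculation, again assuming effective clock = lgc value - offset value (the helper name `lgc_for_target_mhz` is hypothetical):

```python
def lgc_for_target_mhz(target_effective_mhz: int, offset_mhz: int) -> int:
    """lgc value needed for a target effective clock.

    Assumes effective = lgc - offset, so lgc = effective + offset.
    """
    return target_effective_mhz + offset_mhz


target = 1080  # MHz, the effective clock suggested for a 3070 in this post

for offset in (0, -400):
    lgc = lgc_for_target_mhz(target, offset)
    print(f"offset {offset}: lgc {lgc} -> effective {lgc - offset} MHz")
```

With offset 0 this gives lgc 1080, and with offset -400 it gives lgc 680, so the two configurations compared above differ only in how the same 1080 MHz is reached.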

I suspect the software wattmeter may not be accurate in that specific case, so there may be no difference in real power consumption. I will check that theory with a hardware wattmeter once I get my Kill-a-watt.