Hello Fellow Miners,

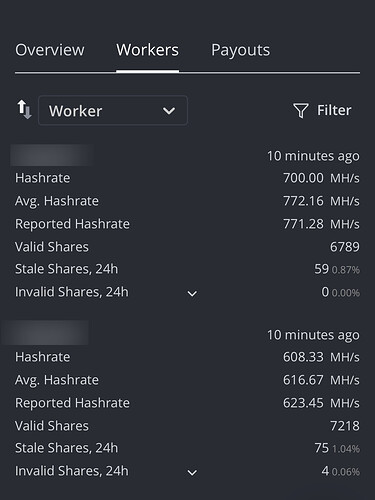

Rig 1 has 8x Gigabutt RTX 3080 cards averaging 772 MH/s while netting 6,789 shares per 24 hours. Rig 2 has 4x RTX 3060 Ti and 4x RTX 3080 cards averaging 616 MH/s while netting 7,218 shares per 24 hours. I am shocked that Rig 2 nets about 430 more shares per day with about 156 MH/s less hashrate than my full 8x RTX 3080 power hog rig. I initially thought my OC settings/timings might be responsible, so I dialed back the OC on the full 3080 rig, without success: Rig 2 is still netting more shares than Rig 1. Rig 1 is full of Gigabutt 3080s, which were known to throttle without thermal pads. Makes me wonder if these cards really underperform compared to other brands.
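If both rigs were credited at the same share difficulty, shares per day should scale with hashrate. A quick back-of-the-envelope check with the numbers above backs out how many hashes each rig computed per share. This is a sketch that assumes the reported MH/s is the rig's average over the same 24 hours as the share count; the function name is just for illustration.

```python
# Figures reported in the post (24-hour averages from the HiveOS dashboard).
# Assumption: hashrate and share count cover the same 24-hour window.
RIGS = {
    "Rig 1 (8x RTX 3080)": {"mhs": 772, "shares_per_day": 6789},
    "Rig 2 (4x RTX 3080 + 4x RTX 3060 Ti)": {"mhs": 616, "shares_per_day": 7218},
}

SECONDS_PER_DAY = 86_400


def hashes_per_share(mhs: float, shares_per_day: int) -> float:
    """Average number of hashes computed per share over one day."""
    total_hashes = mhs * 1e6 * SECONDS_PER_DAY  # MH/s -> H/s, times seconds per day
    return total_hashes / shares_per_day


for name, rig in RIGS.items():
    hps = hashes_per_share(rig["mhs"], rig["shares_per_day"])
    print(f"{name}: {hps / 1e9:.2f} GH per share")
```

With these numbers Rig 1 works out to roughly 9.8 GH per share versus roughly 7.4 GH per share for Rig 2, so the two rigs are not being credited at the same hashes-per-share rate. That would be consistent with, for example, the two workers being assigned different share difficulty, or the hashrate and share counts being measured over different windows; the arithmetic alone can't tell which.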

I assumed hashrate (MH/s) and a low rate of invalid/stale shares dictated share success. Perhaps that is not entirely the case. I am not sure where to start troubleshooting these bizarre results. Everything is hardwired directly to the router, with no Wi-Fi and no switch in line. Maybe Hiveon's worker stats are not reporting values precisely? Maybe Gigabutt's 3080s are really POS in every way? Any feedback would be appreciated. Full specs below:

I have two 8-GPU rigs. Both run HiveOS and mine on the Hiveon pool. Each rig has:

- ASRock H110 Pro BTC+ motherboard
- Intel Core i5-7500 3.4 GHz CPU
- 8 GB RAM (2x 4 GB Patriot DDR4-2400 PC4-19200)
- GPU risers from GPURisers.com
- 2x EVGA 1600 W T2 PSUs (more than enough to safely power 4x 3080s per PSU)
- SSD
- 7x 120 mm supply fans

Rig 1 is populated with 8x Gigabyte Nvidia RTX 3080 OC cards that have had thermal pads installed to avert thermal throttling, since Gigabutt did a terrible job, using subpar to nonexistent materials to dissipate heat from the GDDR6X memory.

Rig 2 has a mix of 4x RTX 3080 and 4x RTX 3060 Ti cards of varying brands.

HiveOS: 5.4.80

Nvidia driver: 455.45.01
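Going back to the 24-hour numbers at the top, one way to test whether the gap is just a bad day: if shares are found at a fixed difficulty, the daily count behaves roughly like a Poisson variable, whose standard deviation is the square root of its mean. The sketch below assumes both rigs earn shares at the same rate per MH/s (so Rig 1 should get about 772/616 times Rig 2's count) and measures how many standard deviations Rig 1's observed count is from that expectation. The helper name is hypothetical.

```python
import math


def poisson_z(observed: int, expected: float) -> float:
    """z-score of an observed count against a Poisson mean (normal approximation)."""
    return (observed - expected) / math.sqrt(expected)


# Figures from the post.
rig1_mhs, rig1_shares = 772, 6789
rig2_mhs, rig2_shares = 616, 7218

# Assumption: both rigs find shares at the same rate per MH/s, so scale
# Rig 2's observed share count up to Rig 1's hashrate.
expected_rig1 = rig2_shares * rig1_mhs / rig2_mhs
z = poisson_z(rig1_shares, expected_rig1)
print(f"expected ~{expected_rig1:.0f} shares, observed {rig1_shares}, z = {z:.1f}")
```

Under those assumptions Rig 1 comes out roughly 24 standard deviations short, far too large to be one unlucky day, which points toward something systematic (share difficulty, reporting, or the hardware) rather than variance. The check treats Rig 2's rate as exact, which is a simplification, but Rig 2's own noise (about ±85 shares per day) doesn't change the conclusion.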